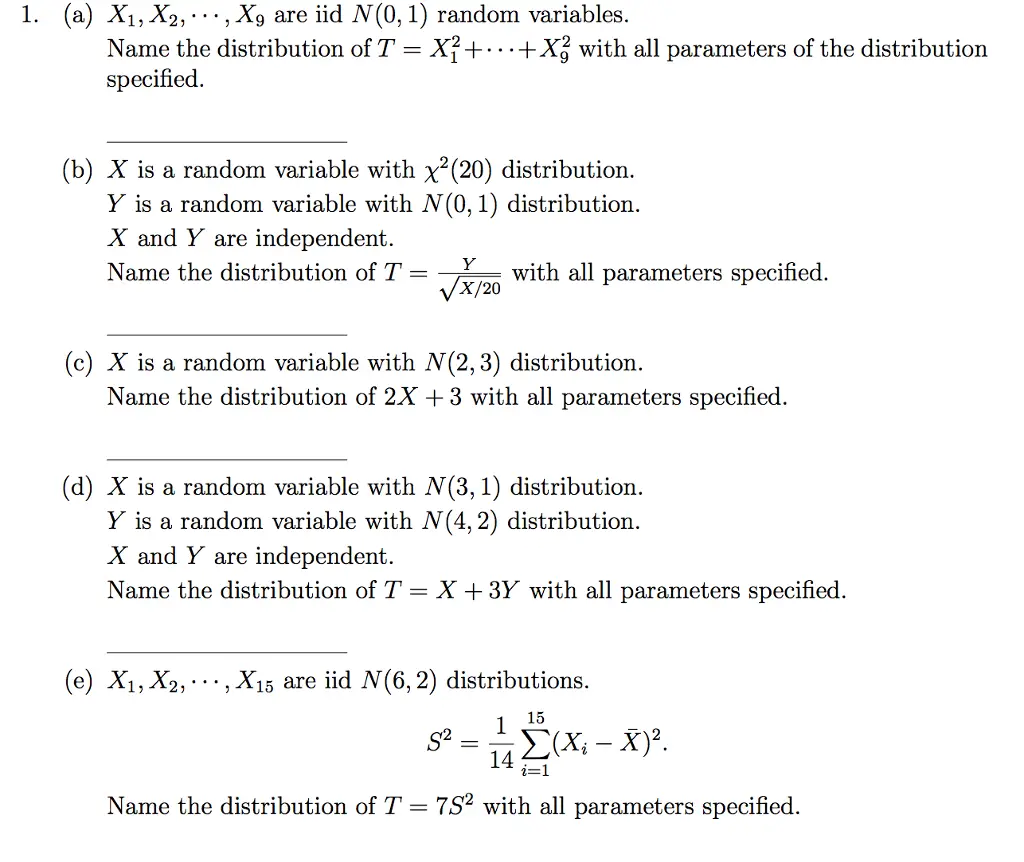

What Is The Eligibility Criteria For This Data Science Certification Program

This Data Science certification program requires the following qualifications:

You Are Given A Dataset On Cancer Detection You Have Built A Classification Model And Achieved An Accuracy Of 96 Percent Why Shouldn’t You Be Happy With Your Model Performance What Can You Do About It

Cancer detection data is typically imbalanced. On an imbalanced dataset, accuracy should not be used as the measure of performance. It is important to focus on the remaining four percent, which represents the patients who were wrongly diagnosed. Early diagnosis is crucial in cancer detection and can greatly improve a patient’s prognosis.

Hence, to evaluate model performance, we should use sensitivity (true positive rate), specificity (true negative rate), and the F measure to determine the class-wise performance of the classifier.
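To make this concrete, here is a minimal sketch in Python that computes these metrics from confusion-matrix counts. The counts are hypothetical (1,000 patients, 40 of whom have cancer) and were chosen only so that accuracy comes out at 96 percent; the function name is illustrative.

```python
def class_wise_metrics(tp, fp, tn, fn):
    """Compute accuracy, sensitivity, specificity and F1 from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    sensitivity = tp / (tp + fn)   # true positive rate (recall)
    specificity = tn / (tn + fp)   # true negative rate
    precision = tp / (tp + fp)
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    return {
        "accuracy": accuracy,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "f1": f1,
    }


# Hypothetical counts: 40 sick patients, only 10 detected, plus 10 false alarms.
metrics = class_wise_metrics(tp=10, fp=10, tn=950, fn=30)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With these made-up counts the model is 96 percent accurate yet detects only a quarter of the actual cancer cases, which is exactly why accuracy alone is misleading here.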

What Are The Types Of Biases That We Encounter While Sampling

Sampling biases are errors that occur when a small sample taken from a large population does not properly represent that population in a statistical analysis. There are three types of biases:

- The selection bias

- The survivorship bias

- The undercoverage bias


What Are The Assumptions Required For A Linear Regression

There are four major assumptions.

1. There is a linear relationship between the dependent variable and the regressors, meaning the model you are creating actually fits the data.

2. The errors or residuals of the data are normally distributed and independent of each other.

3. There is minimal multicollinearity between explanatory variables.

4. Homoscedasticity: the variance around the regression line is the same for all values of the predictor variable.

How Would You Approach A Dataset Thats Missing More Than 30 Percent Of Its Values

The approach will depend on the size of the dataset. If it is a large dataset, then the quickest method would be to simply remove the rows containing the missing values. Since the dataset is large, this won't significantly affect the ability of the model to produce results.

If the dataset is small, then it is not practical to simply eliminate the values. In that case, it is better to calculate the mean or mode of that particular feature and impute that value where there are missing entries.

Another approach would be to use a machine learning algorithm to predict the missing values. This can yield accurate results unless there are entries with a very high variance from the rest of the dataset.
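As a rough illustration of the mean and mode imputation described above, here is a small sketch using only the Python standard library. The `impute` helper and the sample columns are hypothetical; in practice a data-frame library would usually handle this.

```python
from statistics import mean, mode


def impute(values, strategy="mean"):
    """Replace None entries with the mean (numeric) or mode (categorical) of the observed values."""
    observed = [v for v in values if v is not None]
    fill = mean(observed) if strategy == "mean" else mode(observed)
    return [fill if v is None else v for v in values]


# Hypothetical columns with missing entries marked as None.
ages = [25, None, 35, 30, None]
cities = ["Pune", None, "Delhi", "Pune"]

imputed_ages = impute(ages)                      # numeric feature: use the mean
imputed_cities = impute(cities, strategy="mode")  # categorical feature: use the mode
print(imputed_ages)
print(imputed_cities)
```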


How Do You Stay Up

This is a commonly asked question in a statistics interview. Here, the interviewer is trying to assess your interest and ability to find out and learn new things efficiently. Do talk about how you plan to learn new concepts and make sure to elaborate on how you practically implemented them while learning.

If you are looking forward to learning and mastering all of the Data Analytics and Data Science concepts and earning a certification in the same, do take a look at Intellipaat's latest Data Science with R Certification offerings.

Polish Up Your Programming Skills

As discussed earlier, you'll likely face a programming task. Ensure you're up to speed with your preferred programming language (whether Python, R, Java, or another) and get plenty of practice before you get to the interview itself. Practice regularly by writing code and solving challenges or studying code written by experienced developers. If you're not confident with your programming skills, you could attend a bootcamp or participate in online forums such as Stack Overflow.


What Are The Essential Functions And Responsibilities Of A Data Scientist

A Data Scientist identifies the business issues that need to be answered and then develops and tests new algorithms for quicker and more accurate data analytics utilizing a range of technologies such as Tableau, Python, Hive, and others. A Data Scientist also collects, integrates, and analyses data to acquire insights and reduce data issues so that strategies and prediction models may be developed.

How To Learn Data Science

- Who should learn Data Science?

If you have the passion and knack for Data Science, that is all that is required. You must be enthusiastic about the tools and techniques that are essential in this domain. If you are good at mathematics, statistics, any programming language, or any data visualization tool, you will quickly master the concepts.

- How can I start learning Data Science and become a master in it?

The first thing to do is get familiar with all the concepts, applications, various tools, and techniques of Data Science. Consistency in learning and practice is the only way to stay updated and relevant in the Data Science world.

- How do I learn Data Science online?

You can check out all the online Data Science courses that Intellipaat offers. You will also find various tutorials, blogs, interview guides, and community forums to aid your learning.

These courses will help working professionals master this technology without interrupting work hours. The curriculum of these online programs meets industry requirements and standards.

- What are the best learning paths for Data Science?

All kinds of degrees and certifications related to Data Science are good paths into a Data Science career, as you will get the opportunity to gain proficiency in this technology. However, before finalizing, make sure to learn whether the program and faculty are suitable for your learning style.

It is better to opt for an online course if you are already working and want to learn while you earn.


What Is The Meaning Of An Inlier

An inlier is a data point that lies at the same level as the rest of the dataset. Finding an inlier in the dataset is more difficult than finding an outlier, as it requires external data to do so. Inliers, like outliers, reduce model accuracy. Hence, they too are removed when they're found in the data. This is done mainly to maintain model accuracy.

What Is The Roc Curve

The graph between the True Positive Rate on the y-axis and the False Positive Rate on the x-axis is called the ROC curve and is used in binary classification.

The False Positive Rate is calculated by taking the ratio between False Positives and the total number of negative samples, and the True Positive Rate is calculated by taking the ratio between True Positives and the total number of positive samples.

To construct the ROC curve, the TPR and FPR values are plotted at multiple threshold values. The area under the ROC curve (AUC) ranges between 0 and 1. A completely random model, which is represented by a straight diagonal line, has an AUC of 0.5. The amount by which a ROC curve deviates from this straight line denotes the efficiency of the model.

The image above denotes a ROC curve example.
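The construction described above can be sketched in a few lines of plain Python. The labels and scores below are made up for illustration, and the helper names `roc_points` and `auc` are hypothetical; the area is approximated with the trapezoidal rule.

```python
def roc_points(labels, scores):
    """Return (FPR, TPR) pairs, one per threshold, from the strictest threshold to the loosest."""
    positives = sum(labels)
    negatives = len(labels) - positives
    points = [(0.0, 0.0)]  # threshold above every score: nothing is predicted positive
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 1)
        fp = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 0)
        points.append((fp / negatives, tp / positives))
    return points


def auc(points):
    """Area under the curve using the trapezoidal rule."""
    return sum((x2 - x1) * (y1 + y2) / 2
               for (x1, y1), (x2, y2) in zip(points, points[1:]))


# Hypothetical true labels (1 = positive) and model scores.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]

points = roc_points(labels, scores)
area = auc(points)
print(points)
print(round(area, 3))
```

Each threshold produces one point on the curve; a model that ranks every positive above every negative would reach an area of 1.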


Q24 What Do You Understand By The Term Normal Distribution

Data can be distributed in different ways: skewed to the left, skewed to the right, or jumbled up with no clear pattern.

However, data can also be distributed around a central value without any bias to the left or right, in which case it follows a normal distribution in the form of a bell-shaped curve.

Figure: Normal distribution in a bell curve

The random variables are distributed in the form of a symmetrical, bell-shaped curve.

Properties of Normal Distribution are as follows:

- Unimodal: one mode

- Symmetrical: left and right halves are mirror images

- Bell-shaped: maximum height at the mean

- Mean, Mode, and Median are all located in the center

What Is Observational And Experimental Data In Statistics

Observational data refers to the data that is obtained from observational studies, where variables are observed to see if there is any correlation between them.

Experimental data is derived from experimental studies, where certain variables are held constant to see whether changing the others produces any difference in the outcome.


Q106 Explain Gradient Descent

To understand gradient descent, let's first understand what a gradient is.

A gradient measures how much the output of a function changes if you change the inputs a little bit. In model training, it measures the change in error with regard to a change in each of the weights. You can also think of a gradient as the slope of a function.

Gradient descent can be thought of as climbing down to the bottom of a valley, instead of climbing up a hill. This is because it is a minimization algorithm that minimizes a given function.
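A minimal sketch of the idea, assuming a one-dimensional function f(x) = (x - 3)^2 whose gradient is 2(x - 3). The function, starting point, learning rate, and step count are all illustrative choices, not part of the original answer.

```python
def gradient_descent(grad, start, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient to move toward a minimum."""
    x = start
    for _ in range(steps):
        x -= learning_rate * grad(x)  # move downhill, proportionally to the slope
    return x


# Illustrative function f(x) = (x - 3)^2, whose minimum is at x = 3.
minimum = gradient_descent(lambda x: 2 * (x - 3), start=0.0)
print(round(minimum, 6))
```

Each step moves against the slope, so the estimate slides toward the bottom of the valley at x = 3.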

Q89 What Is The Difference Between Machine Learning And Deep Learning

Machine learning is a field of computer science that gives computers the ability to learn without being explicitly programmed. Machine learning can be categorised in the following three categories.

- Supervised machine learning

- Unsupervised machine learning

- Reinforcement learning

Deep Learning is a subfield of machine learning concerned with algorithms inspired by the structure and function of the brain called artificial neural networks.


Best Data Science Certifications To Do In 2022

Data scientists are among the most sought-after IT professionals. Data specialists that are able to keep track of the huge volume of data collected by an organization are becoming an increasingly desirable asset for businesses. Certification is something to consider if you are interested in entering this affluent sector or if you want to differentiate yourself from the other candidates in the area.

If you’re looking to get into the data science field, obtaining a Data Science Certification will help you gain in-demand skills as well as establish your expertise to potential employers. Have a look at our rundown of the top Data Science Certifications you can get in 2022.

List Of Best Data Science Certifications

Data Scientist Course

Taking this IBM-sponsored Data Science course is a great way to jump-start your Data Science career while also receiving the top-notch support and learning you need. This course provides in-depth instruction on some of the most in-demand Data Analytics and Machine Learning abilities, as well as hands-on experience with some of the most important tools and techniques, such as Python, Tableau, R, and the fundamental ideas behind machine learning.

Master the intricacies of data analysis and interpretation, Machine Learning, and robust programming abilities to further your Data Science career with this Data Scientist course from Simplilearn.

- Data Science with Python Certification

- Certification Course in Data Science with R

- Data Science Certification

Independent And Dependent Events

In probability, two events are independent if the occurrence of one does not affect the probability of the other.

The most common example of independent events is throwing two different dice or tossing a coin several times. When we toss a coin, the probability of getting a tail on the second toss isn't affected by the result of the first toss. The probability of getting a tail will always be 0.5.

Meanwhile, two events are dependent if the occurrence of one affects the probability of the other.

An example of a dependent event is drawing cards from a deck. Let's say we want to know the probability of drawing a heart. If you haven't drawn a card from the deck before, the probability of drawing a heart is 13/52. Let's say that you drew a spade in the first draw. Then the probability of drawing a heart in the second draw is no longer 13/52 but 13/51, because one card has already been removed from the deck.

Below are the examples of data science interview questions from various companies that will test our knowledge in dependent and independent events:

Question from :

What is the probability of drawing two cards that have the same suit?

This is an example of a dependent event. The probability that two dependent events both occur can be defined as:

$$P(A \cap B) = P(A) \cdot P(B \mid A)$$
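As a worked sketch of the same-suit question, assuming a standard 52-card deck and drawing without replacement: the first card can be any card, and the second must be one of the 12 remaining cards of that suit out of the 51 cards left.

```python
from fractions import Fraction

# First draw: any card fixes the suit, so this probability is 1.
first_card_any_suit = Fraction(52, 52)

# Second draw: 12 cards of that suit remain among the 51 cards left.
second_matches_suit = Fraction(12, 51)

p_same_suit = first_card_any_suit * second_matches_suit
print(p_same_suit, float(p_same_suit))
```

The product simplifies to 4/17, or roughly 0.235.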



Imagine That Jeremy Took Part In An Examination The Test Is Having A Mean Score Of 160 And It Has A Standard Deviation Of 15 If Jeremys Z

To determine the solution to the problem, the following formula is used:

$$X = \mu + Z\sigma$$

Here:

- $\mu$: Mean

- $\sigma$: Standard deviation

- $X$: Value to be calculated

Therefore, $X = 160 + 15Z = 173.8$.
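The z-score value itself is missing from the text above, so the sketch below does not assume it. It applies the formula in both directions and recovers the z-score implied by the stated result of 173.8. The function names are illustrative.

```python
def score_from_z(mean, std_dev, z):
    """X = mean + z * std_dev"""
    return mean + z * std_dev


def z_from_score(mean, std_dev, x):
    """Invert the formula: z = (X - mean) / std_dev"""
    return (x - mean) / std_dev


# Mean 160 and standard deviation 15 come from the question; 173.8 is the stated answer.
implied_z = z_from_score(160, 15, 173.8)
print(round(implied_z, 2))
print(round(score_from_z(160, 15, implied_z), 1))
```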

If you are looking forward to becoming an expert in Statistics and Data Analytics, make sure to check out Intellipaat's online Data Analyst Course program.

What Is A Linear Regression Model List Its Drawbacks

A linear regression model is a model in which there is a linear relationship between the dependent and independent variables.

Here are the drawbacks of linear regression:

- Only the mean of the dependent variable is taken into consideration.

- It assumes that the data is independent.

- The method is sensitive to outlier data values.
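The sensitivity to outliers can be seen in a small sketch that fits a least-squares line by hand. The data points are made up for illustration, and `fit_line` is a hypothetical helper implementing the standard simple-regression formulas.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one predictor: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


xs = [1, 2, 3, 4, 5]
clean = fit_line(xs, [2, 4, 6, 8, 10])         # points lie exactly on y = 2x
with_outlier = fit_line(xs, [2, 4, 6, 8, 30])  # last point replaced by an outlier

print(clean)
print(with_outlier)
```

Changing a single point from 10 to 30 triples the fitted slope in this toy example, which is the kind of distortion the last drawback refers to.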


Top Categories For Data Science Interview Questions

Data Science is an interdisciplinary field and sits at the intersection of computer science, statistics/mathematics, and domain knowledge. To be able to perform well, one needs to have a good foundation in not one but multiple fields, and it is reflected in the interview. We've divided the questions into 6 categories:

- Machine Learning

- Experiential/Behavioral Questions

We've also provided brief answers and key concepts for each question. Once you've gone through all the questions, you'll have a good understanding of how well you're prepared for your next data science interview!

What Is The Salary Of A Data Science Expert In Chicago

A Data Science Expert’s average pay in Chicago is around $91,430/year. Salary often changes based on a person’s experience and skill level, as well as other factors such as economic situations. Taking a good Data Science certification in Chicago may give you the necessary knowledge and abilities to become a Data Scientist.


Q: Give Me 3 Types Of Statistical Biases And Explain Each Of Them With An Example

- Sampling bias: a biased sample caused by non-random sampling. To give an example, imagine that there are 10 people in a room and you ask if they prefer grapes or bananas. If you only surveyed the three females and concluded that the majority of people like grapes, you'd have demonstrated sampling bias.

- Confirmation bias: the tendency to favour information that confirms one's beliefs.

- Survivorship bias: the phenomenon where only those that survived a long process are included or excluded in an analysis, thus creating a biased sample.

Q64 Explain Svm Algorithm In Detail

SVM stands for support vector machine. It is a supervised machine learning algorithm that can be used for both regression and classification. If you have n features in your training data set, SVM plots each data point in n-dimensional space, with the value of each feature being the value of a particular coordinate. SVM uses hyperplanes to separate the different classes based on the provided kernel function.


Q90 What In Your Opinion Is The Reason For The Popularity Of Deep Learning In Recent Times

Now although Deep Learning has been around for many years, the major breakthroughs from these techniques came just in recent years. This is because of two main reasons:

- The increase in the amount of data generated through various sources

- The growth in hardware resources required to run these models

GPUs are multiple times faster and they help us build bigger and deeper deep learning models in comparatively less time than we required previously.

Q91. Explain Neural Network Fundamentals

A neural network in data science aims to imitate a human brain neuron, where different neurons combine together and perform a task. It learns the generalizations or patterns from data and uses this knowledge to predict output for new data, without any human intervention.

The simplest neural network can be a perceptron. It contains a single neuron, which performs the 2 operations, a weighted sum of all the inputs, and an activation function.
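A minimal sketch of a single perceptron, showing the two operations described above. The bias term, the step activation, and the hand-picked weights (chosen so the neuron behaves like a logical AND) are assumptions made for illustration; no training is performed.

```python
def perceptron(inputs, weights, bias):
    """One neuron: a weighted sum of the inputs followed by a step activation."""
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if weighted_sum > 0 else 0  # step activation function


# Hand-picked (not learned) parameters that make the neuron act as a logical AND.
weights, bias = [1.0, 1.0], -1.5

outputs = [perceptron([a, b], weights, bias) for a in (0, 1) for b in (0, 1)]
print(outputs)
```

Only the input (1, 1) pushes the weighted sum above zero, so the neuron fires for that case alone.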

More complicated neural networks consist of the following 3 layers:

- Input layer

- Hidden layer(s)

- Output layer

The figure below shows a neural network-

Why Is R Used In Data Visualization

R is widely used in Data Visualization for the following reasons:

- We can create almost any type of graph using R.

- R has multiple libraries like lattice, ggplot2, leaflet, etc., and so many inbuilt functions as well.

- It is easier to customize graphics in R compared to Python.

- R is used in feature engineering and in exploratory data analysis as well.


Q14 What Big Data Tools Have You Used

Answer: While all data analysts use data management tools at some point, make sure you consider the context here. Specifically, what tools have you used (or are at least familiar with) that are common in a machine learning setting? Common tools used for machine learning include big data tools, like Apache Hadoop, Apache Spark, and NoSQL databases. These tools, used for distributed computing, are necessary for managing big data and real-time web applications. Apache Spark is arguably the most popular right now.

Spark is a powerful open-source processing engine built for speed, ease of use, and sophisticated analytics. It's used for various machine learning tasks, such as classification, regression, clustering, and dimensionality reduction. If you've never used any of these tools, be honest. But try to familiarize yourself with them before the interview, so at least you don't have to give the interviewer a blank expression if they ask you!