Statistics Interview Questions To Prep For Your Interview

At some point in the data science job interview process, you're going to have to sit down for a technical interview. Chances are that you'll be asked a few questions about statistics, given that statistics is foundational to the field of data science and that data scientists use it on a daily basis.

This interview can be one of the most daunting parts of the entire interview process. With a portfolio, you can spend as much time as you have tinkering with the projects that show off your best work. With an interview, you have to be able to think on your feet.

If that sounds anxiety-inducing, then you're in the right place. Below, we've detailed thirty of the most common statistics interview questions that data science candidates get asked. Study these questions, and you'll be well on your way to acing the interview and landing your dream job.

Q: You Are Compiling A Report For User Content Uploaded Every Month And Notice A Spike In Uploads In October, In Particular A Spike In Picture Uploads. What Might You Think Is The Cause Of This, And How Would You Test It?

There are a number of potential reasons for a spike in photo uploads:

The method of testing depends on the cause of the spike, but you would conduct hypothesis testing to determine if the inferred cause is the actual cause.

How Are Univariate, Bivariate, And Multivariate Analyses Different From Each Other

| Univariate Analysis | Bivariate Analysis | Multivariate Analysis |
| --- | --- | --- |
| When only one variable is being analyzed, using graphs like pie charts, the analysis is called univariate. | When trends in two variables are compared, using graphs like scatter plots, the analysis is of the bivariate type. | When more than two variables are analyzed together to understand their correlations, the analysis is termed multivariate. |

What Is A Normal Distribution

The normal distribution is also known as the Gaussian distribution. It describes data that cluster symmetrically around the mean: values near the mean occur most frequently, and values become rarer the further they lie from it. When represented in graphical form, the normal distribution appears as a bell curve. It is fully described by two parameters, the mean and the standard deviation; because the curve is symmetric, the median and the mode are equal to the mean.

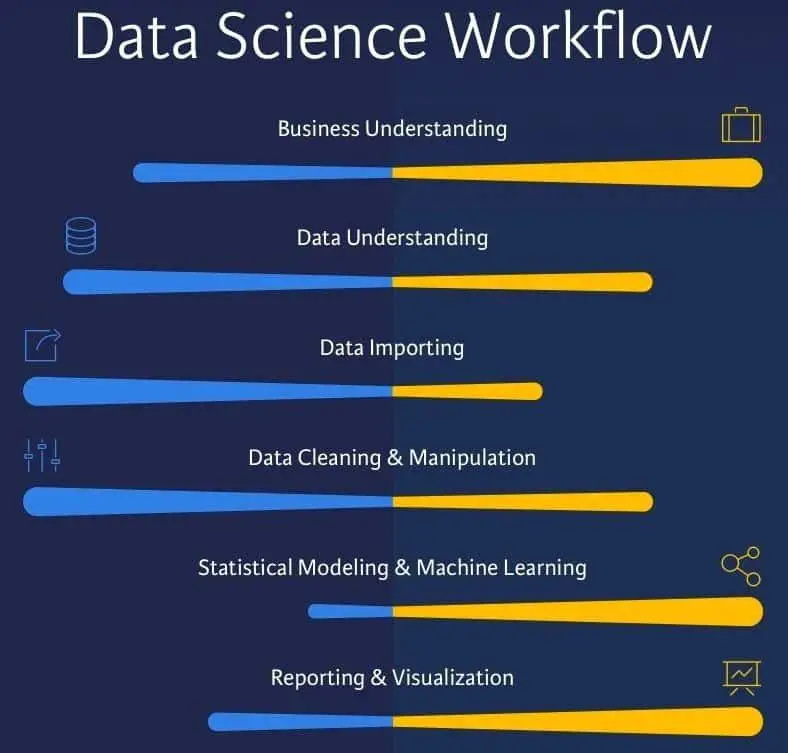
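As a rough illustration of how the two parameters shape the bell curve, the sketch below uses Python's standard `statistics.NormalDist` to compute the share of values that fall within one, two, and three standard deviations of the mean. The helper name `share_within` is made up for this example.

```python
from statistics import NormalDist

# Standard normal distribution: mean 0, standard deviation 1.
dist = NormalDist(mu=0, sigma=1)

def share_within(k):
    """Share of values lying within k standard deviations of the mean."""
    return dist.cdf(k) - dist.cdf(-k)

for k in (1, 2, 3):
    # Roughly 68%, 95%, and 99.7% respectively.
    print(f"within {k} standard deviation(s): {share_within(k):.4f}")
```

The same shares hold for any mean and standard deviation, which is why the rule is often quoted as the 68-95-99.7 rule.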

Explain The Differences Between Big Data And Data Science

Data science is an interdisciplinary field that looks at analytical aspects of data and involves statistics, data mining, and machine learning principles. Data scientists use these principles to obtain accurate predictions from raw data. Big data works with a large collection of data sets and aims to solve problems pertaining to data management and handling for informed decision-making.

How Is The Statistical Significance Of An Insight Assessed

To find out the statistical significance of an insight, hypothesis testing is used. First, the null hypothesis and the alternative hypothesis are stated, and a significance level (alpha), commonly 0.05, is chosen before looking at the results. Then the p-value is calculated: assuming the null hypothesis is true, it is the probability of observing a result at least as extreme as the one in the data. If the p-value is less than alpha, the null hypothesis is rejected and the result is considered statistically significant.
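One way to make these steps concrete is a two-sided z-test for the difference between two proportions. This is only a sketch of one common test, not the only way to assess significance; the function name and the counts (120 of 1,000 versus 160 of 1,000) are invented for illustration.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Two-sided z-test for the difference between two proportions."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    # Pooled rate under the null hypothesis that both groups share one rate.
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Probability of a result at least this extreme if the null were true.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

ALPHA = 0.05  # significance level, fixed before looking at the data

# Hypothetical counts, purely for illustration.
z, p_value = two_proportion_z_test(120, 1000, 160, 1000)
reject_null = p_value < ALPHA
print(f"z = {z:.3f}, p-value = {p_value:.4f}, reject null: {reject_null}")
```

With these made-up counts the p-value comes out at roughly 0.01, which is below alpha, so the null hypothesis of equal rates would be rejected.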

Faqs On Data Scientist Interview Questions

1. What type of questions are asked in a data scientist interview?

Data science interview questions are usually based on statistics, coding, probability, quantitative aptitude, and data science fundamentals.

2. Are coding questions asked at data scientist interviews?

Yes. In addition to core data science questions, you can also expect easy to medium Leetcode problems or Python-based data manipulation problems. Your knowledge of SQL will also be tested through coding questions.

3. Are behavioral questions asked at data scientist interviews?

Yes. Behavioral questions help hiring managers understand if you are a good fit for the role and company culture. You can expect a few behavioral questions during the data scientist interview.

4. What topics should I prepare to answer data scientist interview questions?

Some domain-specific topics that you must prepare include SQL, probability and statistics, distributions, hypothesis testing, p-value, statistical significance, A/B testing, causal impact and inference, and metrics. These will prepare you for data scientist interview questions.

5. Is having a master's degree essential to work as a Data Scientist at FAANG?

Based on our research, you can work as a data scientist even if you only have a bachelor's degree. You can always upgrade your skills via a data science boot camp. But for better career prospects, having an advanced degree may be useful.

What Is The Pareto Principle Used In Statistics

The Pareto principle is also called the 80/20 principle or the 80/20 rule. It states that, in many situations, roughly 80 per cent of the results come from about 20 per cent of the causes. It is a rule of thumb rather than an exact law, so the actual split varies from case to case.

For example, you might find that about 80 per cent of the wheat on a farm comes from about 20 per cent of the wheat plants.
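A quick way to check whether a dataset follows this pattern is to measure how much of the total comes from the top 20 per cent of contributors. The sketch below is a minimal example; the `top_share` helper and the yield figures are made up for illustration.

```python
def top_share(values, top_fraction=0.2):
    """Share of the total contributed by the largest `top_fraction` of values."""
    ordered = sorted(values, reverse=True)
    k = max(1, round(len(ordered) * top_fraction))
    return sum(ordered[:k]) / sum(ordered)

# Hypothetical yields for ten plants, chosen so the split is exactly 80/20.
yields = [40, 40, 4, 3, 3, 3, 2, 2, 2, 1]
share = top_share(yields)
print(f"top 20% of plants produce {share:.0%} of the total")
```

On real data the share will rarely be exactly 80 per cent; the point is only to see how concentrated the results are.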

What Is The Difference Between Data Analysis And Machine Learning

Following is a list of key differences between data analysis and machine learning:

| Data Analysis | Machine Learning |
| --- | --- |
| Data analysis is a process where we inspect, clean, transform, and model data to find useful information, inform conclusions, and support decision-making. | Machine learning is mainly used to automate the entire data analysis workflow to provide deeper, faster, and more comprehensive insights. |
| Data analysis requires a deep knowledge of coding and basic knowledge of statistics. | Machine learning requires a basic knowledge of coding and deep knowledge of statistics and business. |
| In data analysis, we mainly focus on generating valuable insights from the available data. Companies use the data analysis process to make better decisions regarding matters such as marketing and production. | In machine learning, we mainly focus on studying algorithms that improve the overall user experience. It is a subset of artificial intelligence that leverages algorithms to analyze huge amounts of data. |
| Data analysis may require human intervention to inspect, clean, transform, and model data to find useful and trustworthy information. | In machine learning, we use algorithms that learn from data automatically and apply the learning without human intervention. |

What Is The Meaning Of TF-IDF Vectorization

TF-IDF is an acronym for Term Frequency-Inverse Document Frequency. It is used as a numerical measure of how important a word is to a document within a set of documents. This set of documents is usually called the collection or the corpus.

The TF-IDF value increases with the number of times a word appears in a document, and it is offset by the number of documents in the corpus that contain the word, so words that are common everywhere receive a low score. TF-IDF is widely used in Natural Language Processing, particularly in text mining and information retrieval.
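The sketch below computes TF-IDF by hand for a tiny, invented corpus. It uses one simple variant (relative term frequency times the natural log of the inverse document frequency); libraries often apply smoothing or normalization, so their numbers will differ. The `tf_idf` function and the sample sentences are assumptions made for this example.

```python
from collections import Counter
from math import log

def tf_idf(corpus):
    """Return one {term: score} dict per tokenized document in the corpus."""
    n_docs = len(corpus)
    # Document frequency: in how many documents each term appears.
    doc_freq = Counter(term for doc in corpus for term in set(doc))
    scores = []
    for doc in corpus:
        counts = Counter(doc)
        scores.append({
            # Term frequency (relative) times inverse document frequency.
            term: (count / len(doc)) * log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return scores

# Toy corpus, purely for illustration.
corpus = [
    "the cat sat on the mat".split(),
    "the dog sat on the log".split(),
    "the cat chased the dog".split(),
]
scores = tf_idf(corpus)
for term, score in sorted(scores[0].items(), key=lambda item: -item[1]):
    print(f"{term}: {score:.3f}")
```

In the first document, "the" scores zero because it appears in every document, while "mat" scores highest because it appears nowhere else.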

How Can Outlier Values Be Treated

You can drop an outlier if it is a garbage value.

Example: height of an adult = abc ft. This cannot be true, as a height cannot be a string value. In this case, the outlier can be removed.

Outliers with extreme values can also be removed. For example, if all the data points are clustered between 0 and 10 but one point lies at 100, then we can remove this point.

If you cannot drop outliers, you can try the following:

- Try a different model. Data detected as outliers by linear models can be fit by nonlinear models. Therefore, be sure you are choosing the correct model.

- Try normalizing the data. This way, the extreme data points are pulled to a similar range.

- You can use algorithms that are less affected by outliers. An example would be random forests.
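Before deciding how to treat outliers, you first need to find them. One common rule of thumb, which the text above does not prescribe and is shown here only as an assumption, flags points that lie more than 1.5 times the interquartile range (IQR) outside the first or third quartile. The `iqr_outliers` helper is made up for this sketch.

```python
from statistics import quantiles

def iqr_outliers(values, k=1.5):
    """Return the values lying more than k * IQR outside the quartiles."""
    q1, _, q3 = quantiles(values, n=4)  # first quartile, median, third quartile
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < lower or v > upper]

# Points clustered between 1 and 10, plus one extreme point at 100.
data = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 100]
print(iqr_outliers(data))  # [100]
```

Whether a flagged point should actually be dropped still depends on whether it is a garbage value or a genuine observation.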

How Do You Stay Up

This is a commonly asked question in a statistics interview. Here, the interviewer is trying to assess your interest and ability to find out and learn new things efficiently. Do talk about how you plan to learn new concepts and make sure to elaborate on how you practically implemented them while learning.

What Is Root Cause Analysis In Statistics? Can You Give An Example To Explain It?

As the name suggests, root cause analysis is a method used in statistics to solve problems by first identifying the root cause of the problem.

For example, if you see that a higher crime rate in a city is directly associated with higher sales of black-coloured shirts, it means that the two have a positive correlation. However, it does not mean that one causes the other. Whether a suspected cause is the real one can be tested using A/B testing or hypothesis testing.

Preparing For A Data Scientist Interview

It's not uncommon for a data scientist applicant to go through three to five interviews for the role. This can include a phone interview, Zoom interview, in-person interview, and panel interview.

As you might expect, many of the interview questions will focus on your hard skills. However, you can also expect questions about your soft skills, as well as behavioral interview questions that assess both your hard and soft skills.

Here's how you can prepare for your data scientist interview.

Differentiate Between A Multi

| Multi-label Classification | Multi-Class Classification |
| --- | --- |
| A classification problem where each instance in the dataset can be labeled with more than one class. For example, a news article can be labeled with more than one topic, say, sports and fashion. | A classification problem where each instance in the dataset is assigned exactly one class out of two or more classes. For example, the task of classifying fruit images where each image contains only one fruit. |
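The difference shows up directly in how the targets are stored. In the sketch below, which uses invented labels and a made-up `to_indicator_rows` helper, the multi-class target is a single label per item, while the multi-label target is a set of labels per item, converted to one 0/1 column per class.

```python
def to_indicator_rows(label_sets, classes):
    """Encode each set of labels as a row of 0/1 flags, one per class."""
    return [[1 if c in labels else 0 for c in classes] for labels in label_sets]

# Multi-class: exactly one label per fruit image (hypothetical data).
fruit_labels = ["apple", "banana", "apple"]

# Multi-label: each news article may carry several topics (hypothetical data).
article_topics = [{"sports", "fashion"}, {"politics"}, {"sports"}]
classes = ["fashion", "politics", "sports"]

indicator = to_indicator_rows(article_topics, classes)
print(indicator)  # [[1, 0, 1], [0, 1, 0], [0, 0, 1]]
```

A row in the multi-label encoding can contain several ones, whereas a one-hot encoding of a multi-class target always contains exactly one.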

Q: One Box Has 12 Black And 12 Red Cards. A 2nd Box Has 24 Black And 24 Red. If You Want To Draw 2 Cards At Random From One Of The 2 Boxes, Which Box Has The Higher Probability Of Getting The Same Color? Can You Tell Intuitively Why The 2nd Box Has A Higher Probability?

The box with 24 red cards and 24 black cards has a higher probability of getting two cards of the same color. Let's walk through each step.

Let's say the first card you draw from each box is red.

This means that in the box with 12 reds and 12 blacks, there are now 11 reds and 12 blacks. Therefore your odds of drawing another red are equal to 11/(11+12), or 11/23.

In the box with 24 reds and 24 blacks, there would then be 23 reds and 24 blacks. Therefore your odds of drawing another red are equal to 23/(23+24), or 23/47.

Since 23/47 > 11/23, the second box, with more cards, has a higher probability of giving two cards of the same color. Intuitively, removing one card shifts the balance of the remaining colors less in the larger box, so the chance of matching the first card stays closer to 1/2.
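The comparison can be verified with exact fractions. This sketch assumes each box holds the same number of cards of each of two colors; the function name is made up for the example.

```python
from fractions import Fraction

def same_color_probability(cards_per_color):
    """Probability that two cards drawn without replacement share a color."""
    total = 2 * cards_per_color
    # Whatever the first card is, cards_per_color - 1 of the
    # remaining total - 1 cards match its color.
    return Fraction(cards_per_color - 1, total - 1)

small_box = same_color_probability(12)  # Fraction(11, 23)
large_box = same_color_probability(24)  # Fraction(23, 47)
print(small_box, large_box, large_box > small_box)
```

As the number of cards per color grows, the probability approaches 1/2 from below, which matches the intuition that a bigger box is less affected by removing one card.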

Data Science Interview Questions And Answers

Data Science is not an easy field to get into. This is something all data scientists will agree on. Apart from having a degree in mathematics/statistics or engineering, a data scientist also needs to go through intense training to develop all the skills required for this field. Apart from the degree/diploma and the training, it is important to prepare the right resume for a data science job and to be well versed with the data science interview questions and answers.

Consider our top 100 Data Science Interview Questions and Answers as a starting point for your data scientist interview preparation. Even if you are not looking for a data scientist position now, because you are still working your way through hands-on projects and learning programming languages like Python and R, you can start practicing these data scientist interview questions and answers. They will lay the foundation for your data science interviews and help you impress potential employers by showing that you know your subject and understand the practical implications of data science.

You Are Running For Office And Your Pollster Polled A Hundred People. Sixty Of Them Claimed They Will Vote For You. Can You Relax?

- Assume that there's only you and one other opponent.

- Also, assume that we want a 95% confidence interval. This gives us a z-score of 1.96.

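With $\hat{p} = 60/100 = 0.6$, $z^* = 1.96$, and $n = 100$, the confidence interval is $\hat{p} \pm z^* \sqrt{\hat{p}(1-\hat{p})/n} = 0.6 \pm 1.96\sqrt{0.6 \times 0.4 / 100} \approx 0.6 \pm 0.096$, or roughly [50%, 70%]. Therefore, at a 95% confidence level, if you are okay with a worst-case scenario of roughly a tie, then you can relax. Otherwise, you cannot relax until at least 61 out of 100 say yes.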
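The interval for this poll can be checked with a few lines of Python. The sketch uses the normal approximation for a proportion, the same approach implied by the z-score of 1.96; the function name is invented for the example.

```python
from math import sqrt
from statistics import NormalDist

def proportion_confidence_interval(successes, n, confidence=0.95):
    """Normal-approximation confidence interval for a sample proportion."""
    p_hat = successes / n
    # Critical value, about 1.96 for a 95% two-sided interval.
    z_star = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    margin = z_star * sqrt(p_hat * (1 - p_hat) / n)
    return p_hat - margin, p_hat + margin

low, high = proportion_confidence_interval(60, 100)
print(f"95% CI: [{low:.3f}, {high:.3f}]")
```

The lower bound lands just above 0.50, which is why the answer treats a near tie as the worst case. A larger sample with the same 60% support would narrow the interval.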

How Can You Avoid Overfitting Your Model

Overfitting refers to a model that fits its training data too closely, learning noise and quirks specific to that sample, and therefore ignores the bigger picture and performs poorly on new data. There are three main methods to avoid overfitting:

Provide A Simple Example Of How An Experimental Design Can Help Answer A Question About Behavior. How Does Experimental Data Contrast With Observational Data?

Observational data comes from observational studies, in which you observe certain variables without intervening and try to determine whether there is any correlation between them.

Experimental data comes from experimental studies, in which you deliberately change one variable while holding the others constant to determine whether there is any causality.

An example of experimental design is the following: randomly split a group into two. The control group lives their lives normally. The test group is told to drink a glass of wine every night for 30 days. The sleep of the two groups can then be compared to see how wine affects sleep.
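The key step in an experiment like this is assigning people to groups at random, so that the groups differ only in the treatment. The sketch below shows one way to do that and to compare group averages afterwards; the participant names, the fixed seed, and both helper functions are assumptions made for illustration, and no real measurements are included.

```python
import random
from statistics import mean

def assign_groups(participants, seed=0):
    """Randomly split participants into a control and a treatment group."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    shuffled = list(participants)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

def mean_difference(control_values, treatment_values):
    """Difference in average outcome: treatment minus control."""
    return mean(treatment_values) - mean(control_values)

# Hypothetical participant identifiers.
participants = [f"participant_{i}" for i in range(20)]
control, treatment = assign_groups(participants)
print(len(control), len(treatment))
```

Once sleep has been measured for each group, `mean_difference` gives the estimated effect, which can then be checked for statistical significance with a hypothesis test.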

Additional Technical Data Scientist Interview Questions

- Have you worked on a data science project that required a substantial programming component? What did you take away from the experience?

- Describe how to effectively represent data with five dimensions.

- You need to generate a predictive model using multiple regression. What's your process for validating this model?

- How do you ensure that the changes you're making to an algorithm are an improvement?

- Please provide your method for handling an imbalanced data set that's being used for prediction.

- What's your approach to validate a model you created to generate a predictive model of a quantitative outcome variable using multiple regression?

- You have two different models of comparable computational performance and accuracy. Please explain how you decide which to choose for production and why.

- You are given a data set consisting of variables with a substantial portion of missing values. What's your approach?