Explain Complex Mapping And Write Its Features

A mapping is considered complex when it carries many requirements that rest on a large number of dependencies. A mapping does not need hundreds of transformations to be complex; even one with just a few transformations can qualify when the requirement involves many business rules and constraints. Slowly changing dimensions are a common case of complex mapping. Complex mappings typically share the following features.

- Large and complex requirements

- Several transformations

What Motivates You To Stay Up To Date With New Technologies

There are a few reasons why this question might be asked. First, it allows the interviewer to gauge the level of interest that the developer has in keeping up with new technologies. This is important because it can be an indicator of how motivated the developer is to stay current with their skills. Second, it can give the interviewer insight into what motivates the developer to learn new things. This can be important because it can help the interviewer understand how the developer approaches new challenges and how they might handle change in the workplace.

Example: I am motivated to stay up-to-date with the latest technologies because I want to be able to provide the best possible solutions for my clients. I also believe that it is important to be able to keep up with the latest trends in the industry so that I can be better informed about what is happening in the market.

Mention Some Typical Use Cases Of Informatica

There are many typical use cases of Informatica, but this tool is predominantly leveraged in the following scenarios:

- When organizations migrate from the existing legacy systems to new database systems

- When enterprises set up their data warehouse

- While integrating data from various heterogeneous systems including multiple databases and file-based systems

- For data cleansing

Can You List A Few PowerCenter Client Applications With Their Basic Purpose

This is a popular Informatica interview question. The main PowerCenter client applications and their purposes are:

- Administration Console: Used to perform service tasks

- PowerCenter Designer: Provides several design tools, such as the Source Analyzer, Target Designer, Mapplet Designer, and Mapping Designer.

- Workflow Manager: Used to define workflows, the sets of instructions needed to execute mappings

- Workflow Monitor: Monitors workflows and tasks

- Repository Manager: An admin tool primarily used to manage repository folders, objects, and groups.

Differentiate Between Various Types Of Schemas In Data Warehousing

Star Schema

Star schema is the simplest style of data mart schema in computing. It is an approach that is most widely used to develop data warehouses and dimensional data marts. It features one or more fact tables referencing numerous dimension tables.

Snowflake Schema

A snowflake schema is a logical arrangement of tables in a multidimensional database in which centralized fact tables are connected to multiple dimension tables. Snowflaking normalizes the dimension tables of a star schema: low-cardinality attributes are removed and placed in separate tables. The resulting structure resembles a snowflake with the fact table in the middle.

Fact Constellation Schema

A fact constellation schema is used in online analytical processing (OLAP). It is a collection of multiple fact tables that share dimension tables, so it can be viewed as a collection of stars. It can be seen as an extension of the star schema.

What Does MDM Stand For In Informatica

MDM stands for master data management. It is a technique for managing all of an organization's data in a single, consistent system. MDM draws on data in various forms, collected from diverse sources, to ensure that the data can be trusted. It also supports data projects, digital transformation, AI training, and decision-making based on data analytics. Master data management lets all critical data be linked to a master file, and once it is properly established, MDM handles the distribution of data throughout the company. It is widely used as a data integration approach.

Informatica Interview Questions And Answers

Q1). How to Improve the Performance of Aggregators in Informatica?

Aggregator performance improves dramatically when records are sorted before they are passed to the Aggregator. Sort the records on the columns used as group-by ports, for example with a Sorter transformation, and enable the Sorted Input option on the Aggregator.
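
To see why pre-sorted input helps, consider the following plain-Python analogy (it is not Informatica code, and the row data is made up for illustration). When rows arrive sorted by the group key, each group can be totalled and emitted as soon as the key changes, so nothing has to be held in memory for all groups at once.

```python
from itertools import groupby
from operator import itemgetter

# Hypothetical input rows: (department, salary)
rows = [("sales", 100), ("hr", 80), ("sales", 150), ("hr", 70)]


def aggregate_sorted(sorted_rows):
    """Sum salaries per department, assuming rows are already sorted by department."""
    for dept, group in groupby(sorted_rows, key=itemgetter(0)):
        # groupby only sees one group at a time, so memory use stays small
        yield dept, sum(salary for _, salary in group)


# Sort on the group-by column first, as a Sorter transformation would
sorted_rows = sorted(rows, key=itemgetter(0))
totals = list(aggregate_sorted(sorted_rows))
print(totals)
```

If the rows were not sorted first, `groupby` would emit the same department more than once, which is the analogue of an aggregator needing to cache every group until the end of the data.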

Q2). How to Delete Duplicate Rows Using Informatica?

For this purpose, enable the Select Distinct option in the Source Qualifier for the source table and load the target accordingly.
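
In effect, the distinct option makes the source query return each row only once. A minimal sketch of the same idea, using Python's built-in `sqlite3` module and a hypothetical `src_customers` table (both are assumptions for illustration, not part of Informatica):

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE src_customers (id INTEGER, name TEXT)")
conn.executemany(
    "INSERT INTO src_customers VALUES (?, ?)",
    [(1, "Ann"), (2, "Bob"), (1, "Ann")],  # the last row duplicates the first
)

# SELECT DISTINCT drops rows that are identical across all selected columns
unique_rows = conn.execute(
    "SELECT DISTINCT id, name FROM src_customers ORDER BY id"
).fetchall()
print(unique_rows)
```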

Q3). What is a Lookup Cache in Informatica?

A lookup cache is either static or dynamic. A static lookup cache cannot be modified once it is built and stays the same for the whole session run. A dynamic cache can be modified during the session run: rows are inserted into or updated in the cache based on the incoming source data. A lookup cache can also be persistent or non-persistent, depending on whether Informatica retains the cache after the session run completes or deletes it.
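
The difference between a static and a dynamic cache can be sketched with two small Python classes. The class and method names here are hypothetical and only model the behaviour described above; they are not Informatica APIs.

```python
class StaticLookupCache:
    """Built once from the lookup source; never changes during the run."""

    def __init__(self, rows):
        self._cache = dict(rows)

    def lookup(self, key):
        return self._cache.get(key)


class DynamicLookupCache(StaticLookupCache):
    """Can also insert new rows into the cache while the run is in progress."""

    def lookup_or_insert(self, key, value):
        if key in self._cache:
            return self._cache[key], False  # existing row, nothing inserted
        self._cache[key] = value
        return value, True  # new row was added to the cache


static_cache = StaticLookupCache([(1, "Ann")])
dynamic_cache = DynamicLookupCache([(1, "Ann")])

missing = static_cache.lookup(2)                     # key 2 was never cached
inserted = dynamic_cache.lookup_or_insert(2, "Bob")  # key 2 is added on first sight
found_later = dynamic_cache.lookup(2)                # later rows now see key 2
```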

Q4). Is it Possible to Update a Record in the Target Table Without Using an Update Strategy?

Yes, it is possible to update a target table without using an Update Strategy transformation. To do so, define the key in the target first, then connect the key and the fields you want to update.

Q5). How Do Connected and Unconnected Lookups Differ from Each Other?

Connected Lookup:

Unconnected Lookup:

Q6). How are the Router and Filter Different from each other?

Router:

What Is OLAP And What Are Its Types

Online Analytical Processing (OLAP) is a method used to perform multidimensional analysis on large volumes of data from multiple database systems simultaneously. Apart from managing large amounts of historical data, it provides aggregation and summarization capabilities and stores information at different levels of granularity to assist decision-making. Its types include DOLAP (desktop OLAP), ROLAP (relational OLAP), MOLAP (multidimensional OLAP), and HOLAP (hybrid OLAP).

What Is The MD5 Function

MD5 is a hash function in Informatica that is used to evaluate data integrity. It uses Message-Digest Algorithm 5 to calculate the checksum of the input value. MD5 is a one-way cryptographic hash function with a 128-bit hash value. It returns a 32-character string of hexadecimal digits (0-9 and a-f), and returns NULL if the input is a null value.
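
The same behaviour can be reproduced outside Informatica with Python's standard `hashlib` module. This is only an illustrative sketch: the helper name `md5_hex` is hypothetical, and the UTF-8 encoding of the input is an assumption.

```python
import hashlib


def md5_hex(value):
    """Return the 32-character hex MD5 digest of a string, or None for a null input."""
    if value is None:
        return None
    return hashlib.md5(value.encode("utf-8")).hexdigest()


digest = md5_hex("hello")
print(digest, len(digest))
```

A typical use is change detection: hash the concatenated columns of an incoming row and compare the result with the hash stored for the existing row, instead of comparing every column individually.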

Can We Store Previous Session Logs In Informatica If Yes How

Yes, that is possible. When a session runs in timestamp mode, the current session log is not overwritten automatically by later runs.

The properties should be chosen as follows:

- Save session log by > SessionRuns

- Save session log for these runs > Set the number of log files you wish to keep

- To save the log files generated by every run, choose the option Save session log by > Session TimeStamp.

The properties listed above can be found in the session/workflow properties.

Highlight The Qualities You Need To Be Successful In This Role

To succeed in this role, I need to demonstrate strong troubleshooting, analytical, and problem-solving skills. I must also exercise strong organizational, customer service, and communication skills. Additionally, I should have the ability to work independently and with a team and be attentive to details.

What Is Meant By Regression Testing In ETL

Sample answer:

Regression testing is used after developing functional repairs to the data warehouse. Its purpose is to check if said repairs have impaired other areas of the ETL process.

Regression testing should always be performed after system modifications to see if they have introduced new defects.

What Are The Different Types Of Tasks

Different types of tasks include:

What Are The Different Power Bi Tools And How Are They Used

Some of the common tools in Power BI are built-in connectors, Power Query, AI-powered Q&A, machine learning models, Quick Insights, and Cortana integration. The built-in connectors allow the user to connect to both on-premises and cloud data sources, including Salesforce, SQL Server, Microsoft services, and more.

Power Query helps you connect to, combine, and shape data before it is used in reports. Cortana integration allows you to run queries by giving voice commands. In addition, Power BI offers advanced analysis, machine learning, and other AI tools to create live dashboards and check your performance in real time.

What Do You Mean By The Star Schema

The star schema comprises one fact table and one or more dimension tables and is considered the simplest data warehouse schema. It is so called because of its star-like shape, with points radiating from a center: the fact table sits at the core of the star, and the dimension tables are at its points. The approach is most commonly used to build data warehouses and dimensional data marts.
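
As a rough illustration, the sketch below builds a tiny star schema in an in-memory SQLite database and runs a typical query against it. The table names (`fact_sales`, `dim_product`, `dim_date`) and the sample data are hypothetical, chosen only to show one fact table referencing two dimension tables.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    -- Dimension tables: descriptive attributes
    CREATE TABLE dim_product (product_id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE dim_date (date_id INTEGER PRIMARY KEY, year INTEGER);

    -- Fact table: measures plus a foreign key to each dimension
    CREATE TABLE fact_sales (
        product_id INTEGER REFERENCES dim_product(product_id),
        date_id INTEGER REFERENCES dim_date(date_id),
        amount REAL
    );

    INSERT INTO dim_product VALUES (1, 'Laptop'), (2, 'Phone');
    INSERT INTO dim_date VALUES (10, 2023), (11, 2024);
    INSERT INTO fact_sales VALUES (1, 10, 1000.0), (1, 11, 1200.0), (2, 11, 600.0);
    """
)

# A typical star-schema query: join the fact table to a dimension and aggregate
sales_by_year = conn.execute(
    """
    SELECT d.year, SUM(f.amount)
    FROM fact_sales AS f
    JOIN dim_date AS d ON d.date_id = f.date_id
    GROUP BY d.year
    ORDER BY d.year
    """
).fetchall()
print(sales_by_year)
```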

Cracking Informatica Interview Questions

Cracking Informatica interview questions is not rocket science. The field is evolving rapidly and keeps bringing new challenges, so you will never be able to know it all. What you can do is brush up on your foundation: make sure you know and understand the basics of Informatica, because this will give you the upper hand in the interview. Be open and honest about what you know and what you don't know, so that the interviewers get a clear picture of your abilities. Above all, display a learning attitude. Tell the interviewer that you are willing to learn new things and build new skills that will help your personal and professional growth.

Following is the list of some Informatica Interview Questions and their answers.

Why Is ETL Testing Important And How Can It Be Done

Sample answer:

Regular testing is an essential part of the ETL process and ensures that data arrives in the analytics warehouse smoothly and accurately.

ETL testing can be performed in the following ways:

- Review primary sources to make sure the data has been extracted without any loss

- Verify that the data has been transformed into the appropriate data type for the warehouse

- Check that the warehouse accurately reports cases of invalid data

- Document any bugs that occur during the ETL process

Questions About Experience And Background

Questions about experience and background allow an interviewer to learn about your past work that’s relevant to the position. Common experience and background questions include:

How long have you worked in data management?

Have you worked with Informatica before in a professional setting?

What’s the biggest challenge you’ve faced in data warehousing, and how did you solve it?

What kind of companies have you managed data for, and how were the experiences?

What’s the most-extensive data management project you’ve completed?

Have you ever experienced data loss in a data warehouse you manage? What caused it, and did you have backup procedures to recover it?

Tell me about a successful plan you created and executed at your former job and how it benefited the company.

How familiar are you with cloud-based storage for data management?

Do you have experience managing the changeover of a company’s data from local to cloud servers?

How has working with cloud databases benefited you in your work?

What Is A Worklet And What Are The Types Of Worklets

Reusable Worklet:

- Can be assigned to multiple workflows.

Non-Reusable Worklet:

What Attributes Does Informatica Developer 9.1.0 Have

- In the updated version, lookup can be set up as an active transformation that, in the event of a successful match, can return several rows.

- We can now also write SQL overrides for uncached lookups. Previously, this was possible only for cached lookups.

- Control over our session log’s file size: In a real-time environment, we have some degree of control over the size or duration of our session log files.

- Database deadlock resilience: This feature prevents an immediate session failure in the event of a database deadlock. The operation is tried again, and the number of retry attempts is configurable.
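
The retry behaviour described in the last point follows a common pattern that can be sketched in a few lines of Python. Everything named here (`DeadlockError`, `run_with_retry`, `flaky_load`) is hypothetical and stands in for whatever the Integration Service does internally, which the source does not describe.

```python
class DeadlockError(Exception):
    """Stand-in for the error a database raises when it detects a deadlock."""


def run_with_retry(operation, max_retries=3):
    """Run operation(); on a deadlock, retry up to max_retries times, then give up."""
    attempt = 0
    while True:
        try:
            return operation()
        except DeadlockError:
            attempt += 1
            if attempt > max_retries:
                raise  # retries exhausted: fail as the session would


calls = {"count": 0}


def flaky_load():
    """Simulated load that deadlocks on its first two attempts."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise DeadlockError("deadlock detected")
    return "loaded"


result = run_with_retry(flaky_load, max_retries=3)
print(result, calls["count"])
```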

How Many Input Parameters Can Be Present In An Unconnected Lookup

An unconnected lookup can take any number of input parameters. However, no matter how many parameters are passed, there is only one return value. For example, column 1, column 2, column 3, and column 4 can all be passed to an unconnected lookup, but it still returns a single value.
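
A helpful way to picture this is as a function call with several arguments and one result. The Python sketch below is only an analogy; the lookup table, the function name `lkp_price`, and the data are all made up for illustration.

```python
# Hypothetical lookup data keyed on a combination of three input values
PRICE_LOOKUP = {
    ("US", "laptop", 2024): 999.0,
    ("US", "phone", 2024): 599.0,
}


def lkp_price(country, product, year):
    """Take several inputs, return exactly one value (None when there is no match)."""
    return PRICE_LOOKUP.get((country, product, year))


price = lkp_price("US", "laptop", 2024)
no_match = lkp_price("DE", "laptop", 2024)
print(price, no_match)
```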

What Are The Different Ways To Implement Parallel Processing In Informatica

We can implement parallel processing using various types of partition algorithms:

Database partitioning: The Integration Service queries the database system for table partition information. It reads partitioned data from the corresponding nodes in the database.

Round-Robin Partitioning: Using this partitioning algorithm, the Integration service distributes data evenly among all partitions. It makes sense to use round-robin partitioning when you need to distribute rows evenly and do not need to group data among partitions.

Hash Auto-Keys Partitioning: The PowerCenter Server uses a hash function to group rows of data among partitions. When the hash auto-key partition is used, the Integration Service uses all grouped or sorted ports as a compound partition key. You can use hash auto-keys partitioning at or before Rank, Sorter, and unsorted Aggregator transformations to ensure that rows are grouped properly before they enter these transformations.

Hash User-Keys Partitioning: Here, the Integration Service uses a hash function to group rows of data among partitions based on a user-defined partition key. You can individually choose the ports that define the partition key.

Key Range Partitioning: With this type of partitioning, you can specify one or more ports to form a compound partition key for a source or target. The Integration Service then passes data to each partition depending on the ranges you specify for each port.
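
The row-distribution logic behind three of these schemes can be sketched in plain Python. This is a simplified model under stated assumptions, not how the Integration Service is implemented: the function names are hypothetical, rows are plain dictionaries, and `zlib.crc32` is used only as a convenient stable hash.

```python
import zlib
from bisect import bisect_right


def round_robin(rows, n):
    """Distribute rows evenly across n partitions, ignoring their content."""
    parts = [[] for _ in range(n)]
    for i, row in enumerate(rows):
        parts[i % n].append(row)
    return parts


def hash_by_key(rows, n, key):
    """Send rows with the same key value to the same partition."""
    parts = [[] for _ in range(n)]
    for row in rows:
        h = zlib.crc32(str(row[key]).encode("utf-8"))
        parts[h % n].append(row)
    return parts


def key_range(rows, boundaries, key):
    """Boundaries [100, 200] give three ranges: <100, 100 to 199, and >=200."""
    parts = [[] for _ in range(len(boundaries) + 1)]
    for row in rows:
        parts[bisect_right(boundaries, row[key])].append(row)
    return parts


rows = [
    {"id": 50, "region": "east"},
    {"id": 100, "region": "west"},
    {"id": 150, "region": "east"},
    {"id": 250, "region": "west"},
]

rr = round_robin(rows, 2)
hashed = hash_by_key(rows, 2, "region")
ranged = key_range(rows, [100, 200], "id")
```

Round-robin balances the load but scatters related rows, while the hash and key-range variants keep rows with the same key (or the same range of keys) together, which is what grouping transformations need.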

What Do You Mean By Enterprise Data Warehouse

An enterprise data warehouse (EDW) is a form of corporate repository that stores and manages enterprise data and information collected from multiple sources. The data is collected and made available for analysis and business intelligence, to derive valuable business insights and improve data-driven decision-making. Users across the organization can access and use the data it contains. With an EDW, data is accessed through a single point and delivered to the server via a single source.

How Would You Describe Your Experience Working With The Informatica Platform

The interviewer is trying to gauge the candidate’s level of experience with the Informatica platform. This is important because it will help determine whether or not the candidate is a good fit for the position.

Example: I have worked with the Informatica platform for over 5 years now and it has been a great experience. The platform is very user-friendly and easy to use, which makes it perfect for ETL developers. The platform also offers a wide range of features and functionality, which makes it ideal for developing complex ETL solutions. Overall, I have found the Informatica platform to be an excellent tool for ETL development and would highly recommend it to anyone looking for a powerful and user-friendly ETL solution.

What Are The Points Of Difference Between Connected Lookup And Unconnected Lookup

A connected lookup takes its input directly from other transformations and participates in the data flow. An unconnected lookup does the opposite: instead of taking input from other transformations, it receives its values from the result of an LKP expression.

A connected lookup cache can be either dynamic or static, but an unconnected lookup cache cannot be dynamic. A connected lookup can return values through multiple output ports, whereas an unconnected lookup returns a value through only one port. User-defined default values are supported in a connected lookup but not in an unconnected lookup.

What Is Your Experience With Sql

An interviewer would ask an Informatica ETL Developer "What is your experience with SQL?" to gauge their level of experience and expertise with the database programming language. SQL is an important skill for Informatica ETL Developers because it is used to query, update, and manipulate data stored in databases. A strong understanding of SQL is essential for developers working with Informatica ETL tools, which use it extensively for extracting, transforming, and loading data.

Example: I have worked with SQL for over 5 years now. I am very familiar with its syntax and usage. I have also used it to create stored procedures and functions.

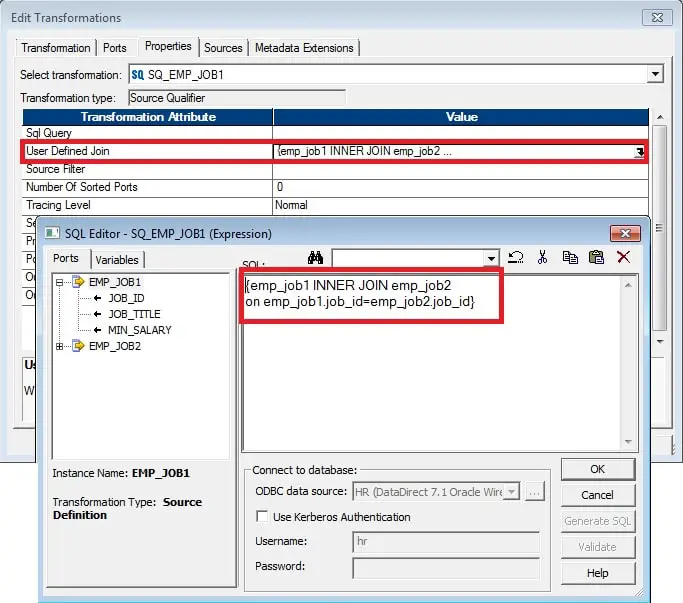

Name The Scenarios When You Should Opt For Joiner Transformation Instead Of Source Qualifier Transformation

Here's the answer to this common Informatica interview question:

Use a Joiner transformation when you are joining data from heterogeneous sources or joining flat files. It is useful for joining data from different relational databases, for joining flat files, and for joining relational sources with flat files.
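
To make the heterogeneous case concrete, here is a small Python sketch that joins a flat file with a relational table. It is an analogy only: the file content, the `customers` table, and the in-memory SQLite database are hypothetical, and the join is an inner join on `customer_id`.

```python
import csv
import io
import sqlite3

# Source 1: a flat file (held in memory here so the example is self-contained)
flat_file = io.StringIO("customer_id,amount\n1,250\n2,90\n3,40\n")

# Source 2: a relational table
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (customer_id INTEGER, name TEXT)")
conn.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Ann"), (2, "Bob")])

# Load the smaller relational source into memory, keyed on the join column
names_by_id = dict(conn.execute("SELECT customer_id, name FROM customers"))

# Stream the flat file and keep only rows that match on customer_id
joined = [
    (names_by_id[int(row["customer_id"])], int(row["amount"]))
    for row in csv.DictReader(flat_file)
    if int(row["customer_id"]) in names_by_id
]
print(joined)
```

A single SQL query could not do this join, because the two sources do not live in the same database. That is the situation in which a Source Qualifier join is not an option.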
